Network improvements at HMCPL

In case you’ve not figured it out yet, we don’t just do books at the Library.

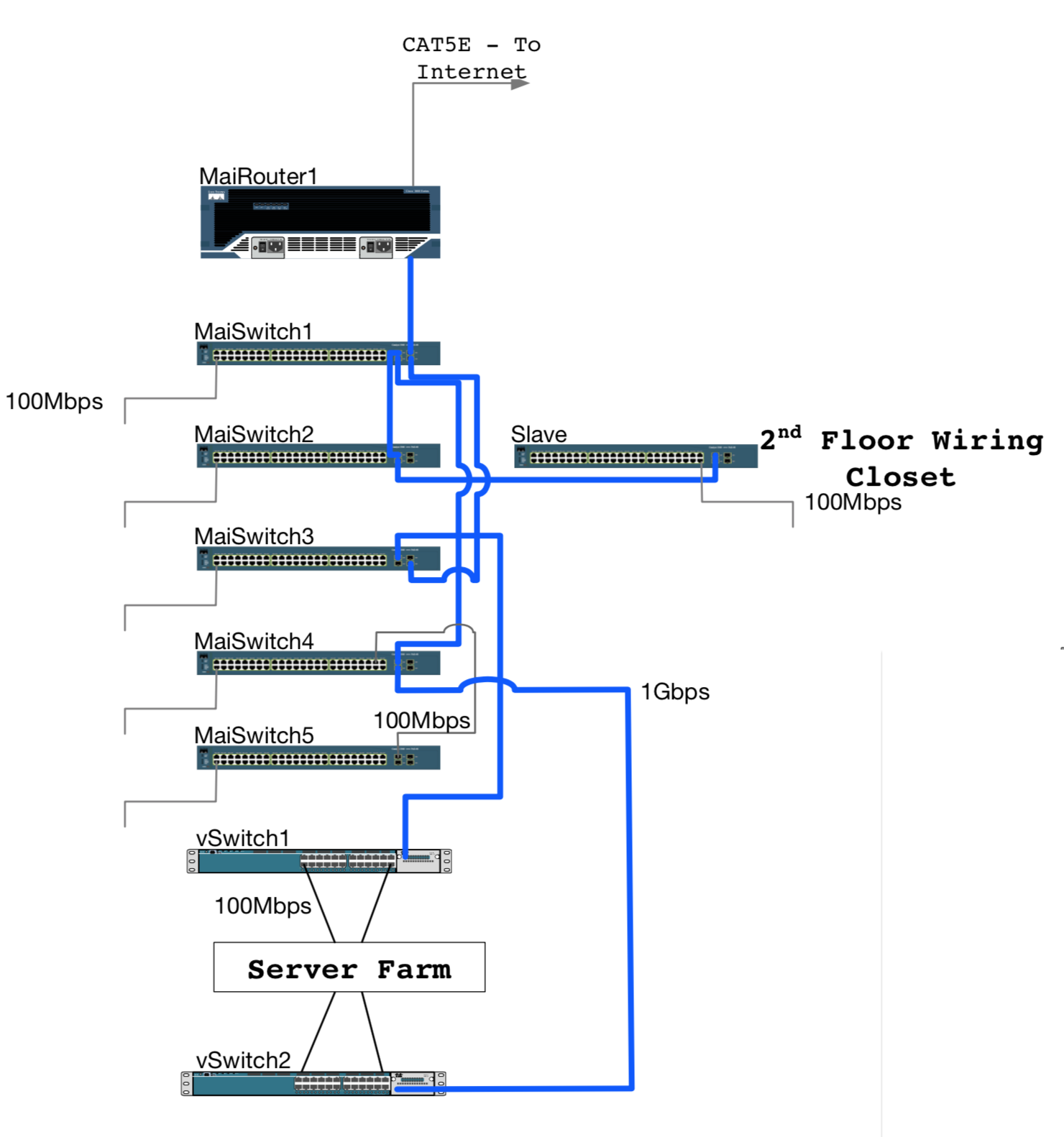

It was about this time of year in 2010, late fall eight years ago, when I started working at the Library. At that time, the LibraryNet was all copper: T1 lines (think: old-style phone-grade circuits) from our branches, a MetroEthernet for our Internet connection, and cabling within our Downtown Branch’s Computer Room that typically ran 100 megabit per second (Mbps) Ethernet links. Improving this infrastructure was quickly identified as one of my primary goals to improve service in the Library.

Here’s that story.

2010

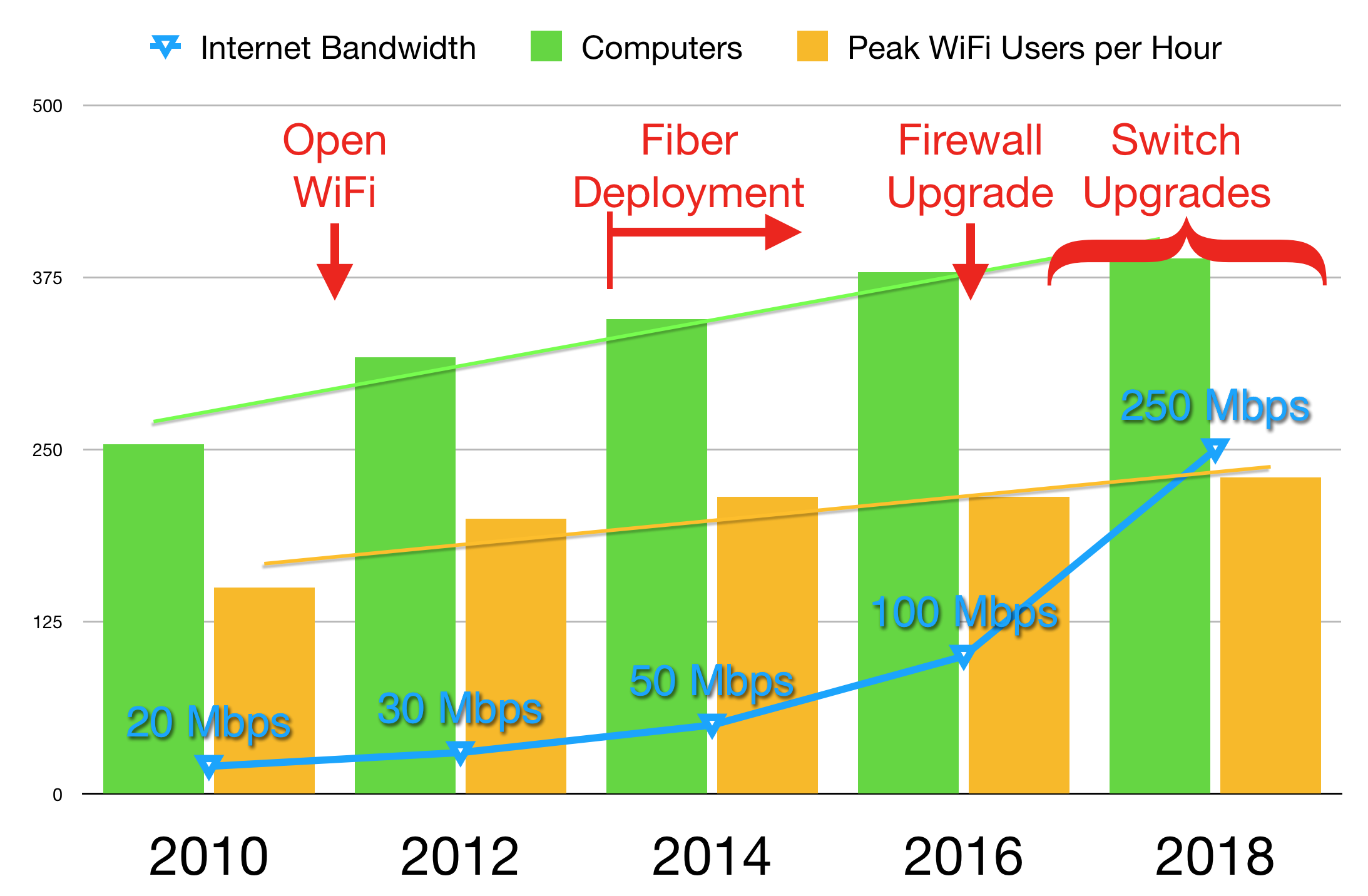

Our Internet connection was 20 Mbps and users had to authenticate on the WiFi network using their library cards. In order to accommodate the number of WiFi users we had, we also continued with a long-standing policy to limit bandwidth to end users at a rate that matched the typical 1.5 Mbps connections at our branches. In April of 2011, tornadoes hit large sections of Alabama, including Madison County. It was an easy decision to remove the requirement for an authenticated login, opening up our network to anyone within range, including those visiting the temporary FEMA site across the street from the Downtown branch, so that citizens could file applications online. We never even considered reversing that change.

2012

The next year we upgraded our network to 30 Mbps and also started increasing the bandwidth available to WiFi users, to 2.5 Mbps per user, due to increased bandwidth requirements, primarily from YouTube videos. Not all branches would benefit from this increase, but it was essential for those locations that had the bandwidth available to them. We could tell when we had set the per-user bandwidth limit too high, because other users would be completely blocked from Internet access and complain even louder. As much as we would have liked to, we found there was no way we could remove the per-user bandwidth limit at this time.

2014

We increased our bandwidth to 50 Mbps, and were warned by our Internet Service Provider (ISP) that our firewall could go no further. We were also reaching the limits on our WiFi controller, which couldn’t handle more than 200 users simultaneously. Accordingly, we upgraded the controller to a unit that could handle up to 400 concurrent sessions, while deciding to put the firewall upgrade on hold. During this period, we also started upgrading the network interconnecting our branches to fiber optic connections. Fiber optics provides much higher speed potential, while also allowing us to upgrade a connection’s bandwidth without having to change anything physically: we just pay more to our ISP and get more for you.

2016

We next upgraded our firewall and then our Internet service to 100 Mbps. Now that we were at 100 Mbps, and as other branches started getting faster fiber optic connections, we increased WiFi bandwidth limits to 5 Mbps. We were now regularly averaging 216 users simultaneously on our WiFi controller, with peaks frequently approaching 300.
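For the curious, here is a rough back-of-the-envelope sketch of why the per-user limit had to stay in place for so long. It uses only the link speeds and per-user limits mentioned above; the function names are made up for illustration, and it assumes the simplest possible model where every user pulls data at the full limit.

```python
# (year, Internet link in Mbps, per-user WiFi limit in Mbps), figures from this post
LINKS = [(2010, 20.0, 1.5), (2012, 30.0, 2.5), (2016, 100.0, 5.0)]


def users_to_saturate(uplink_mbps, cap_mbps):
    """How many users running at the full per-user limit fit within the link."""
    return int(uplink_mbps // cap_mbps)


def fair_share_mbps(uplink_mbps, users):
    """Bandwidth per user if the link were split evenly (a simplifying assumption)."""
    return uplink_mbps / users


for year, uplink, cap in LINKS:
    n = users_to_saturate(uplink, cap)
    print(f"{year}: {n} users at {cap} Mbps each fill a {uplink:g} Mbps link")

# With the 2016 average of 216 simultaneous WiFi users on a 100 Mbps link:
print(f"Even split across 216 users: {fair_share_mbps(100.0, 216):.2f} Mbps each")
```

In other words, even in 2016 only about 20 people streaming at the full limit could have used the whole connection, which is why raising the limit too far left everyone else with nothing.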

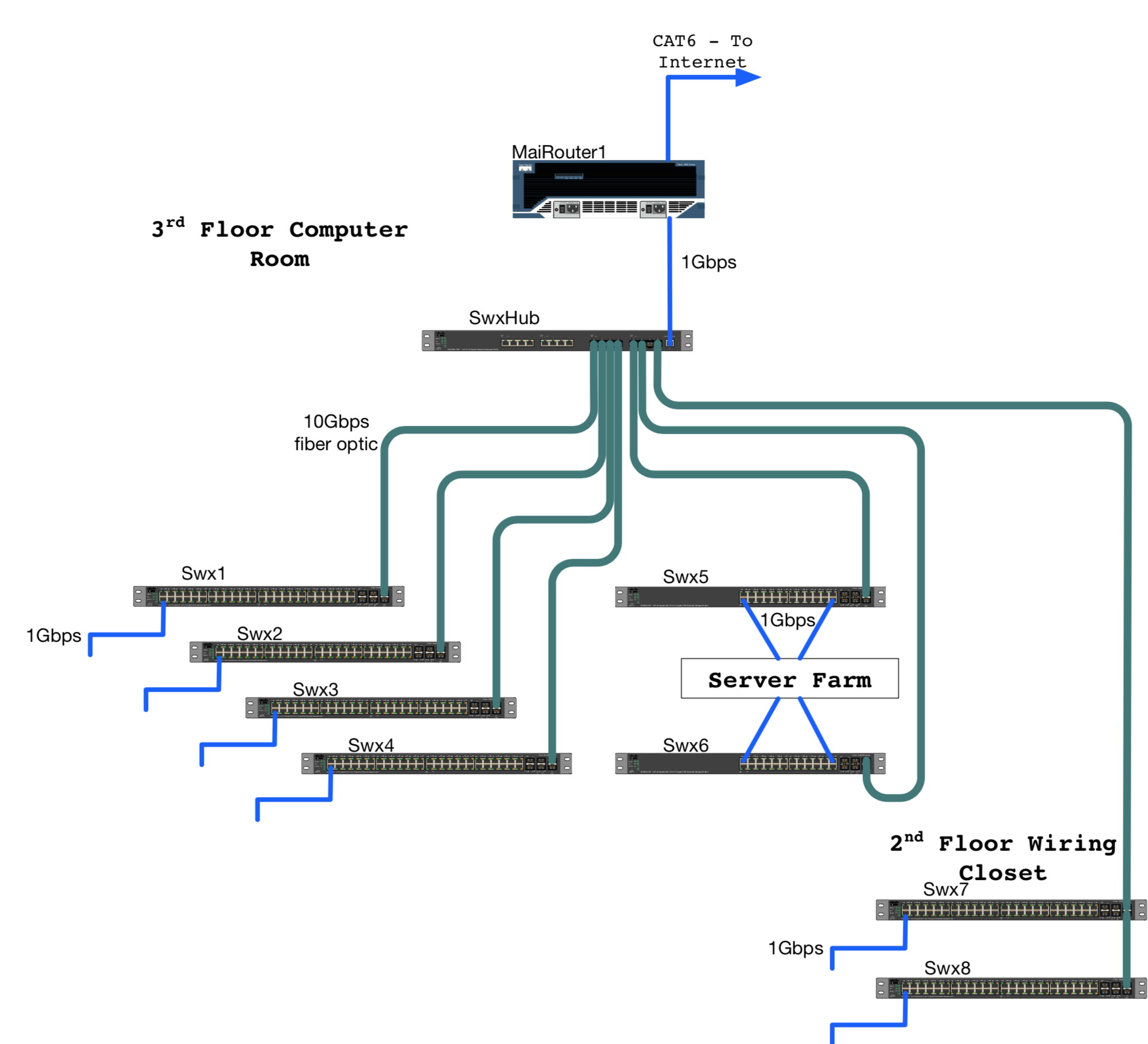

To get ahead of this seemingly never-ending race, we needed to move forward with getting all of our branches converted to fiber optic connections. We also needed to upgrade our core switching infrastructure to handle the higher speeds available with the fiber connections as well as the demands of newer WiFi Access Points (WAPs), the radio transceivers that connect your mobile device to our internal network. We went out to bid to get the hardware needed to upgrade our entire network infrastructure. Our requirements were to get enterprise-grade manageable switches, provide gigabit connections to devices, and interconnect the core switching at Downtown with a 10 gigabit per second (Gbps) fiber optic backbone, 100 times faster than most of our previous connections. This also afforded us the opportunity to replace the hodgepodge connections of our decades-old legacy switching environment with a more logical and faster "hub-and-spoke" design.

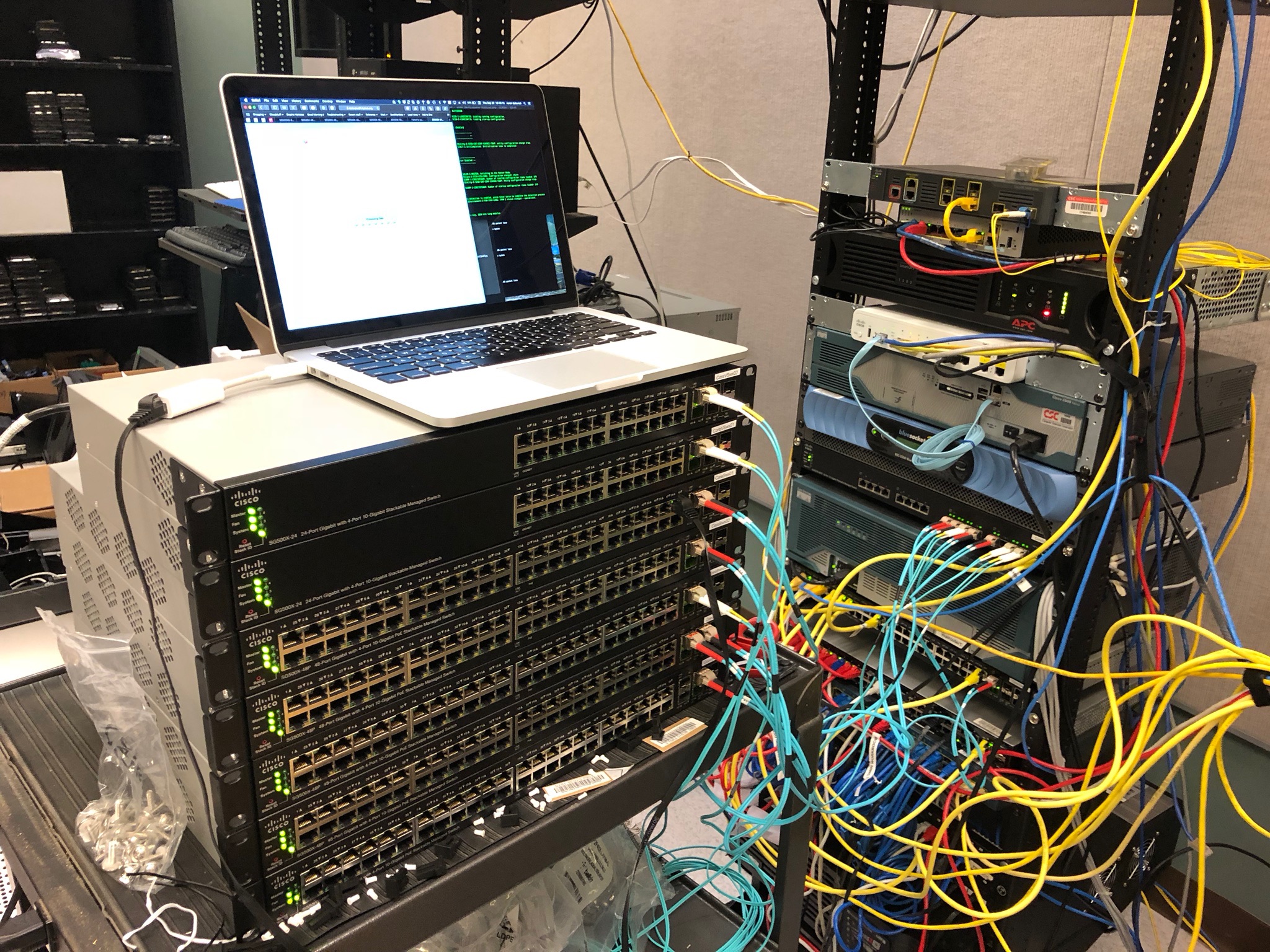

Over the past 2 years, we’ve been implementing the system we purchased. After branches got a fiber optic connection, we installed their new switch. We also upgraded our WAPs to new 802.11n technology, allowing connections in excess of 200 Mbps wirelessly; our busiest WAPs now see 40+ concurrent connections.

Engineering school didn’t teach me that fiber optics could be muddy.

2018

After a month spent upgrading, configuring, and testing, we closed the entire Library system for one day late this September to install the last batch of switches. The replacement itself was an all-day project, requiring the complete removal of all the old switches and installation of all the new switches, including the two core switches used to drive our main virtualized server farm. Once the new switches were in place and after several days of testing the production environment, we contacted our ISP and signed a contract to upgrade our Internet connection to 250 Mbps. This past week, we got our long-awaited upgrade. As soon as we confirmed the upgrade, we did the things I set out to do 8 years before: remove all of the bandwidth restrictions and the remainder of the protocol filters in place. We now provide unlimited Internet access to you.

Beyond

The next step in our project will be to virtualize our WiFi controller, removing the 400-user limit, as we’re now regularly seeing over 300 users simultaneously on our WiFi network. We will also be installing 802.11ac WAPs with the ability to handle even more users, county-wide.

I hope you can forgive us for taking so long, but getting the infrastructure right was a complex project and far more costly than we could afford to do in one fell swoop. Doing it in the wrong order would benefit no one. On top of the network upgrade, we virtualized our compute environment, built 3 new branch buildings (Triana in 2014, Cavalry Hill in 2017, and Madison in 2018), and are working on two more designs to take you into the future. I’m happy to say, the infrastructure in place now should take HMCPL well into the last half of the coming decade, if not further.